Edge Computing for Metaverse: How to Reduce Latency at Scale

May 15, 2026

Imagine putting on a VR headset and feeling an instant kickback when you swing a virtual sword. Or walking through a digital store where the floor feels solid under your feet, not like floating in a void. This isn't just about graphics; it's about timing. If there is even a slight delay between your movement and what you see, your brain gets confused. That confusion leads to nausea, dizziness, and a total loss of immersion. This is why **edge computing** is the unsung hero behind the metaverse. Without it, large-scale virtual worlds simply wouldn't work.

The problem with traditional cloud computing is distance. Data has to travel from your device to a massive data center and back. Even at the speed of light, that trip takes time. For casual web browsing, milliseconds don't matter. But for the metaverse, they are everything. Edge computing solves this by bringing the processing power closer to you, literally to the cell tower or local server down the street. It cuts out the long haul, delivering the real-time responsiveness that makes virtual reality feel real.

Why Speed Matters More Than Graphics

We often obsess over photorealistic textures and high-resolution avatars. While those look good, they are useless if the system lags. The human body is incredibly sensitive to timing discrepancies. When your eyes tell you you're turning left, but your inner ear senses a half-second delay, you get cybersickness. This condition mimics motion sickness, causing nausea and disorientation. It’s the number one reason people quit VR after their first session.

To prevent this, the interaction needs to be nearly instantaneous. Industry experts suggest that for single-participant interactions in the metaverse, roundtrip latency must stay below 10 milliseconds. Compare that to video calls or cloud gaming, which can tolerate around 100 milliseconds. A 10-millisecond window is razor-thin. It’s less than the blink of an eye. If your network adds even 20 milliseconds of lag, you’ve already doubled the acceptable limit. This is why raw processing power alone isn't enough; location is the critical variable.

What exactly is cybersickness?

Cybersickness is a form of motion sickness caused by a conflict between visual and vestibular (balance) systems. In VR, this happens when latency causes a delay between user movement and visual feedback, leading to symptoms like nausea, headache, and disorientation.

The Physics of Data Travel

You might think fiber optic cables are fast enough. They are, but physics imposes hard limits. Light travels at roughly 200,000 kilometers per second in fiber optics, which is about two-thirds of the speed of light in a vacuum. Data therefore experiences approximately 5 microseconds of latency for every kilometer it travels. Let’s put that in perspective. If you are in New York and the server is in Los Angeles, that’s about 4,500 kilometers away. The round trip takes roughly 45 milliseconds in propagation delay alone, before any routing or processing time is added. That is more than four times the 10-millisecond budget for immersive metaverse experiences.
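The arithmetic above can be checked with a few lines of Python. This is a minimal sketch that assumes the 5 microseconds per kilometer figure quoted in the text and counts propagation delay only; the function names are illustrative, and real round trips are longer once routing, queuing, and processing are included.

```python
# Propagation delay in fiber, using the ~5 microseconds/km figure from the text.
FIBER_DELAY_US_PER_KM = 5.0


def round_trip_ms(distance_km: float) -> float:
    """Propagation-only round-trip time in milliseconds for a one-way distance."""
    one_way_us = distance_km * FIBER_DELAY_US_PER_KM
    return 2 * one_way_us / 1000.0


def max_one_way_km(budget_ms: float) -> float:
    """Farthest a server can be (one way) if the whole budget goes to propagation."""
    return budget_ms * 1000.0 / (2 * FIBER_DELAY_US_PER_KM)


if __name__ == "__main__":
    print(round_trip_ms(4500))   # New York to Los Angeles: 45.0 ms
    print(max_one_way_km(10))    # 10 ms budget: 1000.0 km
```

Read the other way around, a 10-millisecond budget puts the server at most about 1,000 kilometers away even if nothing else in the pipeline takes any time, and in practice it has to be much closer.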

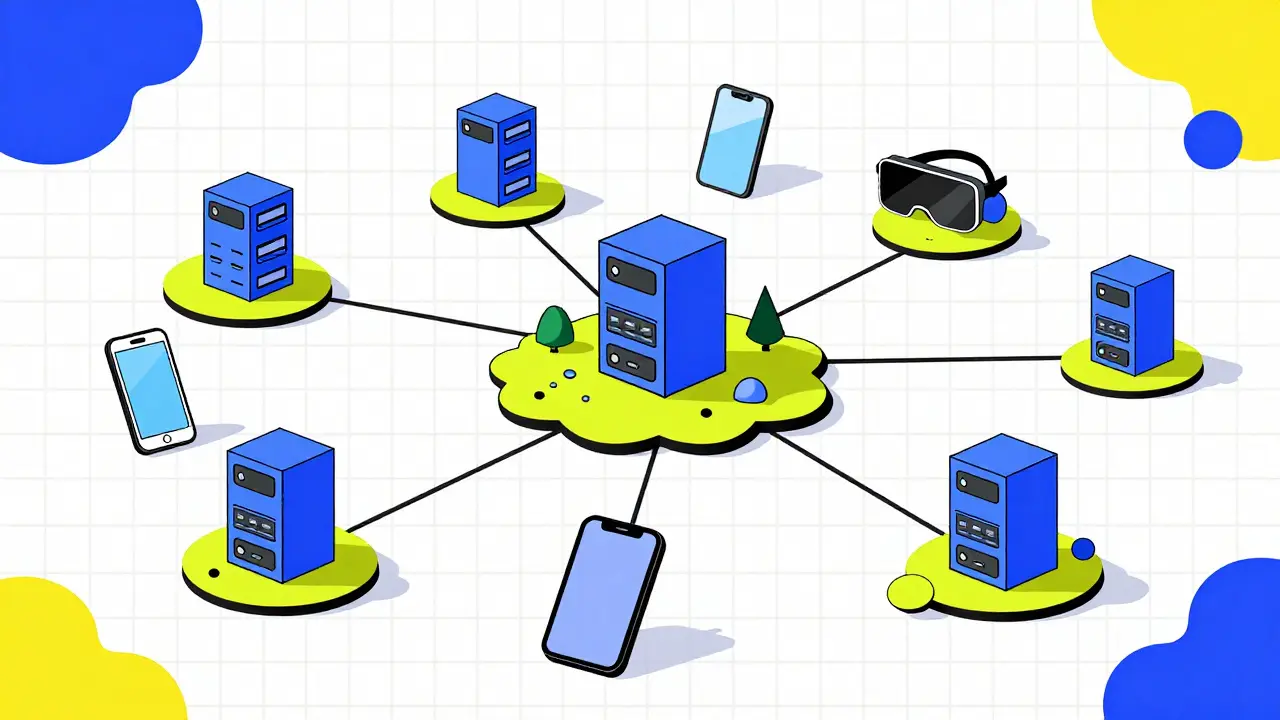

This physical constraint means we cannot rely on centralized mega-data centers for real-time virtual world rendering. No matter how much we upgrade the servers, the distance will always add up. The solution is to stop sending data so far. By placing computational resources at the "edge" of the network, near base stations and local hubs, we drastically reduce the physical distance data must travel. This shift from a network-centric model to a user-centric model is the core promise of Multi-Access Edge Computing (MEC).

How Edge Architecture Differs from Cloud

In a traditional cloud setup, all heavy lifting happens in a few giant facilities. Your VR headset sends input data to the cloud, the cloud processes the physics and graphics, and sends the result back. This creates a bottleneck. Not only is the latency high due to distance, but the network also gets congested with massive amounts of uplink and downlink traffic.

Edge computing flips this script. Instead of scaling up capacity in one place, you scale out across millions of sites. Imagine a hierarchical system where the cloud still handles long-term storage and global synchronization, but the immediate, real-time computations happen locally. Edge servers process specific virtual building calculations or avatar movements right near the user. This distributed approach reduces network congestion and eliminates the roundtrip delay to distant data centers. It allows wearable devices, which often have limited battery and processing power, to offload heavy tasks to nearby powerful servers.
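One way to picture this split is a placement rule: real-time work goes to the closest site that fits the latency budget, and everything else goes to the cloud. The sketch below is purely illustrative. The `Site` type, the site names, the distances, and the processing times are all made-up assumptions, and the latency estimate uses only the 5 microseconds per kilometer propagation figure plus a fixed processing time.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

FIBER_DELAY_US_PER_KM = 5.0  # propagation figure quoted earlier in the article


@dataclass(frozen=True)
class Site:
    """A hypothetical compute location (edge node or cloud region)."""
    name: str
    distance_km: float    # one-way distance from the user
    processing_ms: float  # assumed time to compute one response


def estimated_latency_ms(site: Site) -> float:
    """Round-trip propagation delay plus processing time."""
    propagation_ms = 2 * site.distance_km * FIBER_DELAY_US_PER_KM / 1000.0
    return propagation_ms + site.processing_ms


def place_task(realtime: bool, edge_sites: Sequence[Site], cloud: Site,
               budget_ms: float = 10.0) -> Optional[Site]:
    """Send non-real-time work to the cloud; send real-time work to the
    fastest edge site within budget, or return None if no site qualifies."""
    if not realtime:
        return cloud
    in_budget = [s for s in edge_sites if estimated_latency_ms(s) <= budget_ms]
    return min(in_budget, key=estimated_latency_ms, default=None)


# Made-up example sites.
edge_sites = [Site("tower-a", 5, 4.0), Site("metro-hub", 80, 2.0)]
cloud = Site("cloud-west", 4500, 1.0)

if __name__ == "__main__":
    print(place_task(True, edge_sites, cloud).name)   # metro-hub
    print(place_task(False, edge_sites, cloud).name)  # cloud-west
```

Note that the nearest site is not automatically the best one: in this example the metro hub wins because its faster hardware outweighs the extra 75 kilometers.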

| Feature | Centralized Cloud | Edge Computing (MEC) |
|---|---|---|
| Latency | High (50ms+ for cross-country) | Low (<10ms target) |
| Data Path | Long-distance fiber optics | Local network proximity |
| Scaling Model | Scale Up (bigger servers) | Scale Out (more locations) |
| Network Load | High congestion risk | Distributed load reduction |
| User Experience | Risk of cybersickness | Seamless immersion |

The Role of 5G and Network Infrastructure

Edge computing doesn't work in a vacuum; it needs a robust backbone. This is where 5G networks come into play. Current 5G implementations offer downlink speeds of up to 200 megabits per second, but more importantly, they provide the low-latency, high-throughput connections required for edge nodes to communicate efficiently with end-user devices. Without this wireless infrastructure, the edge servers would be isolated islands.

Telecommunications standards bodies like ETSI have formalized this concept as Multi-Access Edge Computing (MEC). This standardization ensures that different providers can build compatible edge infrastructures. It transitions the architecture from rigid, centralized models to flexible, decentralized ones. For firms offering augmented reality (AR) and virtual reality (VR), reconfiguring their computing power to leverage these edge nodes is no longer optional; it’s essential. Centralized provision, regardless of optimization, simply cannot meet the sub-10ms constraints required for shared, persistent virtual environments.

Scaling Challenges and Energy Efficiency

Building a metaverse that supports billions of users requires solving massive scaling challenges. In the past, deploying edge systems was cost-prohibitive and energy-intensive. However, recent advances in data systems have made edge deployment far more feasible. Modern edge servers are highly capable, cost-effective, and energy-efficient. They can handle computation-intensive tasks that previously required supercomputers.

Furthermore, dynamic resource allocation and edge intelligence allow these systems to adapt in real time. If a particular virtual zone becomes crowded, the edge network can dynamically allocate more processing power to that specific node. This prevents bottlenecks and ensures consistent performance. Academic surveys of Mobile Edge Computing published on arXiv highlight that this hybrid approach, which combines cloud layers for simulation with edge layers for immediate processing, eliminates fragmentation and computational bottlenecks. It addresses the three primary challenges of metaverse systems: latency, privacy, and energy consumption.
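As a rough illustration of dynamic allocation, the sketch below splits a fixed pool of compute units across virtual zones in proportion to how many users each zone currently holds. It is a toy model, not how any particular edge platform works: the function name, the zone names, and the idea of a "compute unit" are all assumptions made for the example. Leftover units from rounding go to the zones with the largest fractional shares.

```python
from typing import Dict


def allocate_units(users_per_zone: Dict[str, int], total_units: int) -> Dict[str, int]:
    """Split total_units across zones in proportion to their user counts."""
    total_users = sum(users_per_zone.values())
    if total_users == 0:
        return {zone: 0 for zone in users_per_zone}

    # Exact (fractional) share for each zone.
    shares = {zone: total_units * users / total_users
              for zone, users in users_per_zone.items()}
    # Start from the whole-number part of each share.
    allocation = {zone: int(share) for zone, share in shares.items()}

    # Hand the remaining units to the zones with the largest fractional parts.
    leftover = total_units - sum(allocation.values())
    by_fraction = sorted(shares, key=lambda z: shares[z] - allocation[z], reverse=True)
    for zone in by_fraction[:leftover]:
        allocation[zone] += 1
    return allocation


if __name__ == "__main__":
    # A concert fills the "arena" zone, so it receives most of the 16 units.
    print(allocate_units({"plaza": 120, "arena": 600, "shop": 80}, 16))
    # {'plaza': 2, 'arena': 12, 'shop': 2}
```

Rerunning the function whenever the user counts change gives the adaptive behavior described above: a zone that fills up receives a larger share on the next pass.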

Real-World Implications Beyond Gaming

While gaming gets the most attention, the demand for low-latency services extends across all sectors. Industrial IoT applications require real-time monitoring and control. Remote surgery depends on haptic feedback that cannot tolerate lag. Collaborative design tools need instant synchronization for teams working globally. Edge computing provides the foundational infrastructure for these diverse use cases. It enables a fleet of decentralized local edge data centers to distribute computational load, ensuring that whether you are playing a game or conducting a remote operation, the response is immediate and reliable.

The transition to edge computing represents a fundamental shift in how we organize digital infrastructure. It moves us from a model where data travels far to reach processing power, to one where processing power travels close to the data source. For the metaverse to thrive, this shift is not just an enhancement; it is the prerequisite. As we move through 2026 and beyond, the success of immersive technologies will depend entirely on our ability to deploy these edge nodes at scale, maintaining the sub-10ms latency that keeps users engaged, comfortable, and present.

What is the difference between edge computing and cloud computing?

Cloud computing processes data in centralized, distant data centers, while edge computing processes data locally, near the user or device. Edge computing significantly reduces latency by minimizing the distance data must travel, making it ideal for real-time applications like the metaverse.

Why is 10 milliseconds considered the latency threshold for the metaverse?

The human brain detects delays greater than 10-20 milliseconds as unnatural, leading to sensory conflicts. Exceeding this threshold causes cybersickness (nausea, dizziness) and breaks immersion, making real-time interaction feel disjointed.

How does 5G support edge computing?

5G provides the high-bandwidth, low-latency connectivity required for edge devices to communicate instantly with local edge servers. It enables the rapid data transfer necessary for real-time AR/VR experiences without relying on slower, long-distance networks.

Can existing data centers handle metaverse traffic?

No, traditional centralized data centers suffer from inherent latency due to physical distance. To support the metaverse, infrastructure must shift to decentralized edge nodes located in close proximity to users to meet sub-10ms latency requirements.

What is Multi-Access Edge Computing (MEC)?

MEC is a standardized IT environment with cloud-computing capabilities at the edge of a network. Defined by ETSI, it allows developers to run applications locally, reducing latency and improving bandwidth efficiency for connected devices.